OpenAI is building an ad business and here is what that actually means.

Sam Altman spent years calling advertising a last resort. Now he's building one of the most powerful ad targeting machines the industry has ever seen, whether he admits it or not.

Sam Altman spent years saying advertising was a last resort. Now he is building one of the most powerful ad targeting machines the industry has ever seen, right behind Google, Meta, and Amazon. Whether he admits it or not, it is happening because OpenAI needs it to reach profitability.

Let us go back to what he actually said. In 2024, Altman stood in front of an audience at Harvard Business School and called the combination of AI and advertising “uniquely unsettling”. He described ads as a last resort business model. He was not subtle about it. Then in 2025 he hired Fidji Simo, the former Instacart CEO, and made her the CEO of Applications at OpenAI. Now we are in 2026 and OpenAI has officially announced it is testing ads inside ChatGPT for free users in the US. The last resort he mentioned two years ago is now here.

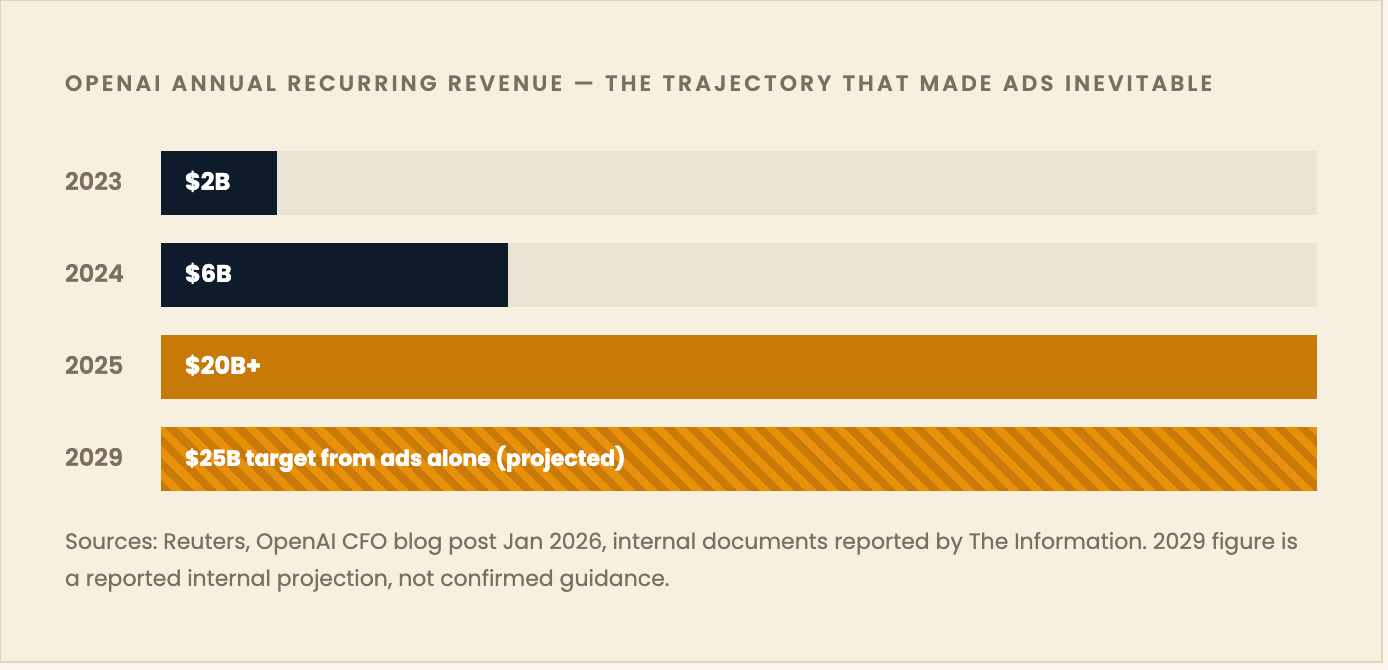

So what changed and why did this happen? ChatGPT has become the default consumer AI product for much of the world. Gemini is there and Claude is there, but Claude is focused on B2B, while Gemini spans both B2B and B2C as Google leans into AI Mode. OpenAI, meanwhile, has over 800 million weekly active users and only five percent of them pay for a subscription. Revenue went from six billion to twenty billion dollars in a single year and it still is not enough. Eight hundred million users with five percent paying is not going to pay for itself. OpenAI is running probably the biggest free service in the history of technology, and the company posted approximately eight billion dollars in operating losses in just the first half of 2025. The infrastructure costs to keep the product alive and magical do not disappear just because the experience feels effortless.

The revenue picture is good, but the business is not self-sufficient, and that is why you are seeing all of these unusual investment structures. The Stargate joint venture with SoftBank and Oracle is planning five hundred billion dollars in AI infrastructure over four years. That is definitely not a number you cover with five percent of your users paying for a subscription. So the answer is ads. That is how you pay for it.

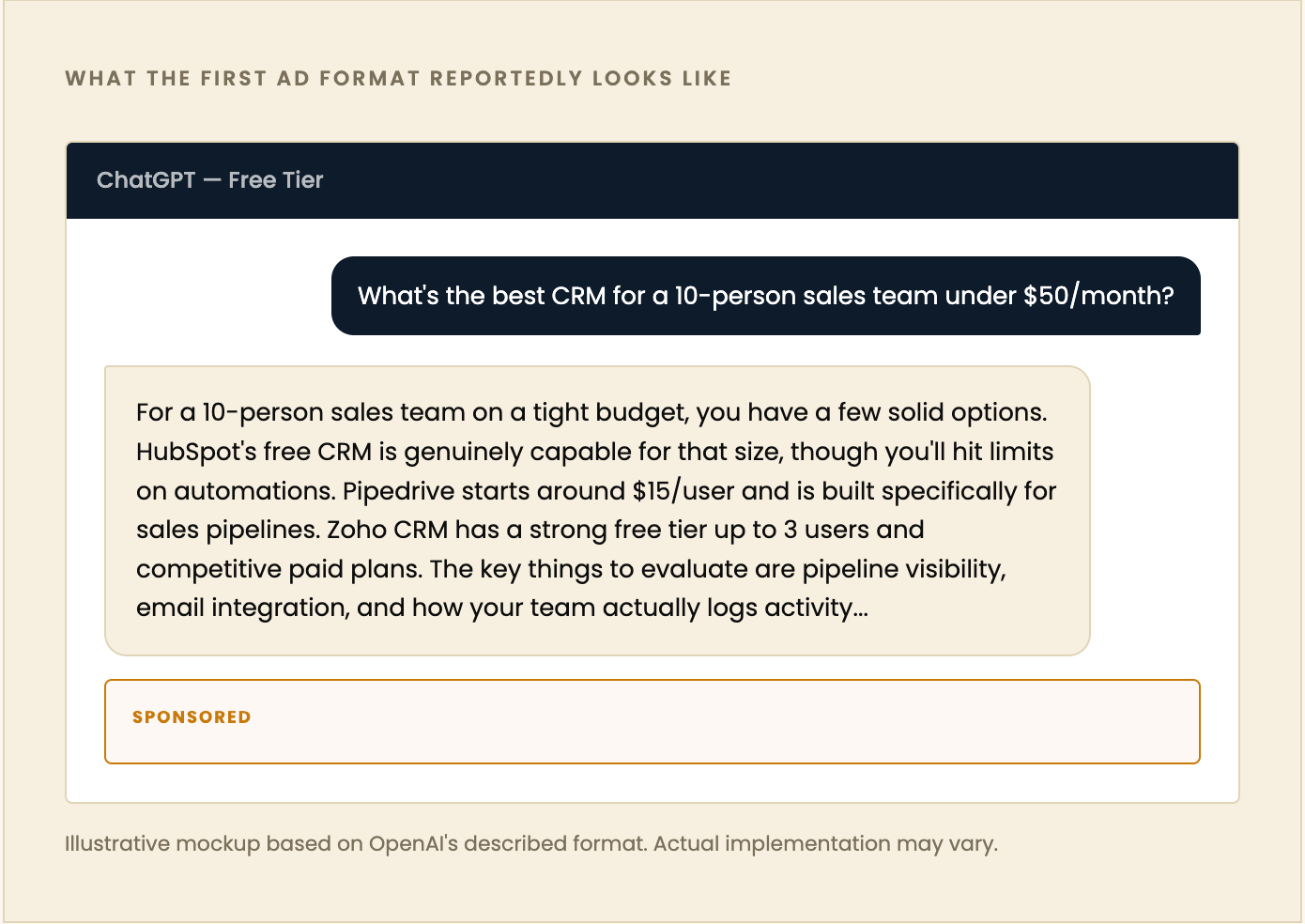

Here is what we actually know from OpenAI’s announcement. They are testing ads at the bottom of answers when there is a relevant sponsored product or service based on your current conversation. The ads will be clearly labeled. Users can dismiss them. OpenAI says it will not sell your conversation data to advertisers and will not optimize for time spent in the product. This is the trust play for right now, and we all know that what gets said at launch and what actually happens as revenue pressure grows are two different things. Paid tiers including Plus, Pro, Business, and Enterprise will not include ads. The ad product is specifically designed for the ninety-five percent of users who are paying nothing.

Now here is where it gets strategically interesting. The format they announced is just the starting point. What they are actually building underneath is something far more significant and it is very different from Google Search. Not Gemini, Google Search specifically.

Google’s ad model is built on keywords. You type three words and Google infers intent from them. That inference is worth billions because it works and Google has perfected that machine over decades. But it is still just three words. Google does not know if you are a forty-year-old training for a half marathon or a college student buying their first pair of running shoes. It makes a probabilistic guess using cookies, browsing history, and demographic signals stitched together over years of modeling. It is good at it. But ChatGPT is different.

ChatGPT has your entire conversation. Not three words, paragraphs. Context. Follow-up questions. The specific problem you are trying to solve. When someone tells ChatGPT they need a CRM, they run a ten-person sales team, they close around two hundred deals a month, and their budget is tight because they just raised a seed round, that is more targeting signal in a single session than Google has ever had on a single query. That is the structural difference. OpenAI’s internal framing for this is reportedly intent-based monetization. They are not building a display network. They are building the most accurate purchase intent signal the industry has ever produced.

Now, should Google actually be worried? Not really, at least not yet. Google can easily integrate this into AI Mode and they are doing exactly that. But the immediate threat is real at the bottom of the funnel. If ChatGPT is capturing commercial intent queries and serving ads against them, that is real revenue that would have gone to Google Search. Those queries are already flowing to ChatGPT at scale, across eight hundred million weekly users, before a single ad dollar has even been sold. Meta is less immediately threatened because their game is audience targeting, not intent targeting. They know who you are and they know what you are likely to want. OpenAI is catching you at the moment of decision. Those are different muscles.

The single biggest risk for OpenAI is not building the product. It is building it in a way that makes users feel like the recommendations are for sale. The entire value proposition of ChatGPT is that it gives you the best answer. The moment someone suspects the best answer goes to the highest bidder, the product is broken. OpenAI knows this. The commitments around labeling, not selling data, and keeping ads below the response rather than inside it suggest they are threading this needle carefully for now. Whether it holds as revenue pressure increases is the real thing to watch.

Now let us get into how this actually works under the hood because the architecture here is genuinely different from anything that has existed before.

In a traditional ad system, whether that is Google Search, Meta, or programmatic display, the serving flow is essentially stateless. A user sends a signal, an auction runs in milliseconds, a winning ad gets served, and that is it. Whatever the ad server knows about what you searched for yesterday arrives as modeled signals, not as something you told it directly. What it really sees is this query and this moment. ChatGPT completely breaks that model. The conversation is stateful. Every message builds on the last one. By the time a user asks a product question, the model has potentially seen dozens of messages that tell it who you are, what you are trying to accomplish, and what your constraints are. That context window is arguably the most valuable targeting dataset ever assembled in a single user session. Google has spent years estimating and modeling this kind of context. ChatGPT gets it immediately from the conversation itself.

Here is how the conversational ad serving likely works based on what has been reported. First, context processing: the model reads the full conversation thread and builds a real-time intent profile from everything said. Second, intent classification: the system determines whether the conversation has commercial intent and whether the user is researching, comparing, or ready to act. Not every conversation triggers an ad. Third, ad matching: relevant advertisers are paired to the conversation context, including both traditional sponsored placements and the more advanced generative ad format where the AI writes the copy itself. Fourth, response generation: the organic answer comes first and the sponsored content is appended below it, clearly labeled. Fifth, feedback: users can dismiss ads and explain why, which trains relevance over time.
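To make the flow above concrete, here is a minimal Python sketch of steps one through four. Everything in it is an assumption for illustration: the `STAGE_KEYWORDS` table, the `Ad` record, and the function names are hypothetical, and a real system would presumably use a model rather than keyword matching. It is not OpenAI's implementation, just the shape of the pipeline as reported.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical keyword table standing in for a real intent model.
# Stages are checked in order, most purchase-ready first.
STAGE_KEYWORDS = {
    "ready_to_act": ("buy", "pricing", "budget", "sign up"),
    "comparing": ("compare", " vs ", "alternative", "which is better"),
    "researching": ("recommend", "best", "need a", "looking for"),
}


@dataclass
class Ad:
    advertiser: str
    topic: str  # lowercase topic the ad is relevant to, e.g. "crm"
    copy: str


def classify_intent(messages: list[str]) -> Optional[str]:
    """Steps 1-2: read the whole thread, return a funnel stage or None."""
    text = " ".join(messages).lower()
    for stage, keywords in STAGE_KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            return stage
    return None  # no commercial intent detected, so no ad


def match_ad(messages: list[str], inventory: list[Ad]) -> Optional[Ad]:
    """Step 3: pick the first ad whose topic appears in the conversation."""
    text = " ".join(messages).lower()
    for ad in inventory:
        if ad.topic in text:
            return ad
    return None


def respond(messages: list[str], organic_answer: str, inventory: list[Ad]) -> str:
    """Step 4: organic answer first, labeled sponsored unit appended below."""
    if classify_intent(messages) is None:
        return organic_answer
    ad = match_ad(messages, inventory)
    if ad is None:
        return organic_answer
    return f"{organic_answer}\n\n[Sponsored] {ad.advertiser}: {ad.copy}"


if __name__ == "__main__":
    inventory = [Ad("ExampleCRM", "crm", "Built for small sales teams.")]
    thread = ["I need a CRM for a ten-person sales team", "Our budget is tight"]
    print(respond(thread, "Here are a few options to consider.", inventory))
```

The point of the sketch is the ordering: the organic answer is produced independently, and the sponsored unit is only ever appended after it, which mirrors the separation OpenAI has committed to publicly. The feedback step is left out here.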

The most technically interesting part is what is being called generative ads. This is not a static banner or a text link. The AI writes the ad itself in real time based on the advertiser’s product information and the specific conversation context. An advertiser provides features, pricing, and use cases and the model generates copy that speaks directly to what that particular user asked. Someone asking about CRM for a small sales team gets a different pitch than someone asking about CRM for enterprise procurement, even from the same product. No existing ad system does that at scale. Google’s responsive search ads approximate this but they are still working from static building blocks the advertiser wrote. This is different.
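One way to picture the generative format is as prompt assembly: the advertiser's structured product information plus the conversation goes in, and a model writes the copy. The sketch below only builds that prompt and stops before any model call. The `ProductInfo` fields and `build_ad_prompt` are hypothetical names chosen for illustration, not a documented OpenAI interface.

```python
from dataclasses import dataclass


@dataclass
class ProductInfo:
    """Hypothetical advertiser-supplied inputs: features, pricing, use cases."""
    name: str
    features: list[str]
    pricing: str
    use_cases: list[str]


def build_ad_prompt(product: ProductInfo, conversation: list[str]) -> str:
    """Combine static product facts with the live conversation context."""
    context = "\n".join(f"- {message}" for message in conversation)
    return (
        f"Write a short, clearly labeled sponsored message for {product.name}.\n"
        f"Features: {', '.join(product.features)}\n"
        f"Pricing: {product.pricing}\n"
        f"Use cases: {', '.join(product.use_cases)}\n"
        f"Tailor it to this conversation:\n{context}"
    )


if __name__ == "__main__":
    crm = ProductInfo(
        name="ExampleCRM",
        features=["pipeline tracking", "email sync"],
        pricing="per-seat monthly plan",
        use_cases=["small sales teams", "enterprise procurement"],
    )
    print(build_ad_prompt(crm, ["We are a ten-person sales team on a tight budget."]))
```

The same `ProductInfo` yields a different prompt for every conversation, which is the difference from responsive search ads: the advertiser supplies facts, not finished headlines.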

The hardest part and the one nobody is talking about enough is attribution. Every ad business lives and dies on measurement. You have to be able to tell an advertiser their spend worked. In search that flow is relatively clean. User clicked, user converted, here is the data. In a conversational interface the path is much murkier. The user might engage with a sponsored result in ChatGPT, not click, come back three days later in a new session, and then convert through a completely different channel. Which touchpoint gets the credit and how do you assign value to a conversation that influenced a purchase without a direct click? OpenAI is going to need to build new measurement frameworks from scratch. The traditional last-click model does not work here. Until that measurement layer exists and buyers trust it, the CPMs on ChatGPT inventory will be discounted. Not because the intent signal is weak but because marketers cannot prove the ROI in a way their finance teams will accept. That is the real near-term barrier to OpenAI building a massive ad business. Not format. Not user trust. Measurement.
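The credit problem is easy to see with a toy example. The sketch below compares last-click attribution with a simple linear multi-touch split over the kind of path described above. The touchpoint names and the 120-dollar conversion value are made up for illustration, and linear splitting is just one well-known alternative, not a claim about what OpenAI will build.

```python
from collections import defaultdict


def last_click(touchpoints: list[str], value: float) -> dict[str, float]:
    """Give all conversion credit to the final touchpoint."""
    if not touchpoints:
        return {}
    return {touchpoints[-1]: value}


def linear(touchpoints: list[str], value: float) -> dict[str, float]:
    """Split conversion credit equally across every touchpoint."""
    if not touchpoints:
        return {}
    credit: dict[str, float] = defaultdict(float)
    share = value / len(touchpoints)
    for touchpoint in touchpoints:
        credit[touchpoint] += share
    return dict(credit)


if __name__ == "__main__":
    # Hypothetical path: sponsored result seen but not clicked, a return
    # visit three days later, then a conversion through another channel.
    path = ["chatgpt_sponsored_view", "chatgpt_return_session", "email_click"]
    print(last_click(path, 120.0))
    print(linear(path, 120.0))
```

Under last-click, the ChatGPT exposure gets nothing even though it started the journey. Under the linear split, it gets a share, but only if the sponsored view can be tied to the later conversion in the first place, which is exactly the measurement layer that does not exist yet.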

The early signal on pricing tells you everything about what OpenAI is trying to build here. Early beta partners reportedly included Target, Adobe, Ford and Expedia with minimum commitments around $200K and CPMs sitting around $60, which is closer to streaming TV than anything you would see in search. They are not trying to win on volume. They are going after the upfront budget.
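For a sense of scale, the arithmetic behind those reported numbers is simple: CPM is the cost per thousand impressions, so a budget divided by the CPM and multiplied by one thousand gives the impressions it buys. The function names below are illustrative, and the inputs are the reported figures, not confirmed rates.

```python
def impressions_for_budget(budget_usd: float, cpm_usd: float) -> float:
    """Impressions a budget buys at a given CPM (cost per 1,000 impressions)."""
    return budget_usd / cpm_usd * 1000


def cost_per_impression(cpm_usd: float) -> float:
    """Cost of a single impression at a given CPM."""
    return cpm_usd / 1000


if __name__ == "__main__":
    # Reported beta terms: roughly a $200K minimum at a CPM of about $60.
    print(f"{impressions_for_budget(200_000, 60):,.0f} impressions")
    print(f"${cost_per_impression(60):.2f} per impression")
```

At those figures, the minimum commitment buys roughly 3.3 million impressions at about six cents each, which is a small, premium buy rather than a volume play.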

So what happens next? Here is how I expect it to play out. OpenAI starts conservative with clearly labeled units at the bottom of responses, targeting SaaS and DTC brands where intent is obvious. Within twelve to eighteen months the generative ad format becomes the main product and static placements look like a placeholder by comparison. The measurement problem gets solved through a combination of post-click attribution and advertiser-reported conversion data fed back into the model. OpenAI builds something closer to Amazon’s retail media model, where the conversation, the recommendation, and the transaction all happen in the same place, than to Google’s search model. Google responds aggressively through their own Gemini ad integration because they have the infrastructure, the signals, and the relationships to do it faster.

Here is my honest take on all of this. This is very much needed for OpenAI to stay afloat. Add up the compute costs for eight hundred million users, the graphics cards, the data centers, and the energy, and it is not a sustainable model without another revenue stream. Paid subscriptions alone will not subsidize a product that is free for seven hundred and sixty million people. Ads are a proven business model. They work. They are probably not the announcement users want to hear but they are the only realistic way to keep this product alive, keep improving it, and keep it accessible to the people who cannot or will not pay.

The setup is there. The users are there. The money is there to be made. The miss here is timing. OpenAI should have started building this in 2024 when Altman was still calling ads a last resort. That hesitation was expensive. Google is not going to sit still. They have the ad infrastructure already built, they have the user data, they have the signals, and they have everything to gain by integrating Gemini properly into their ad stack. OpenAI has the intent signal and the conversational format. Google has everything else. That race is the story of the next two years and we shall see how it plays out.